Bots give equal weight to scientific and non-scientific sources, potentially directing sufferers away from approved treatments and preventing them from receiving the life-saving help they need, study finds

A new study has found that AI chatbots habitually recommend alternatives to chemotherapy for treating cancer, potentially putting lives at risk.

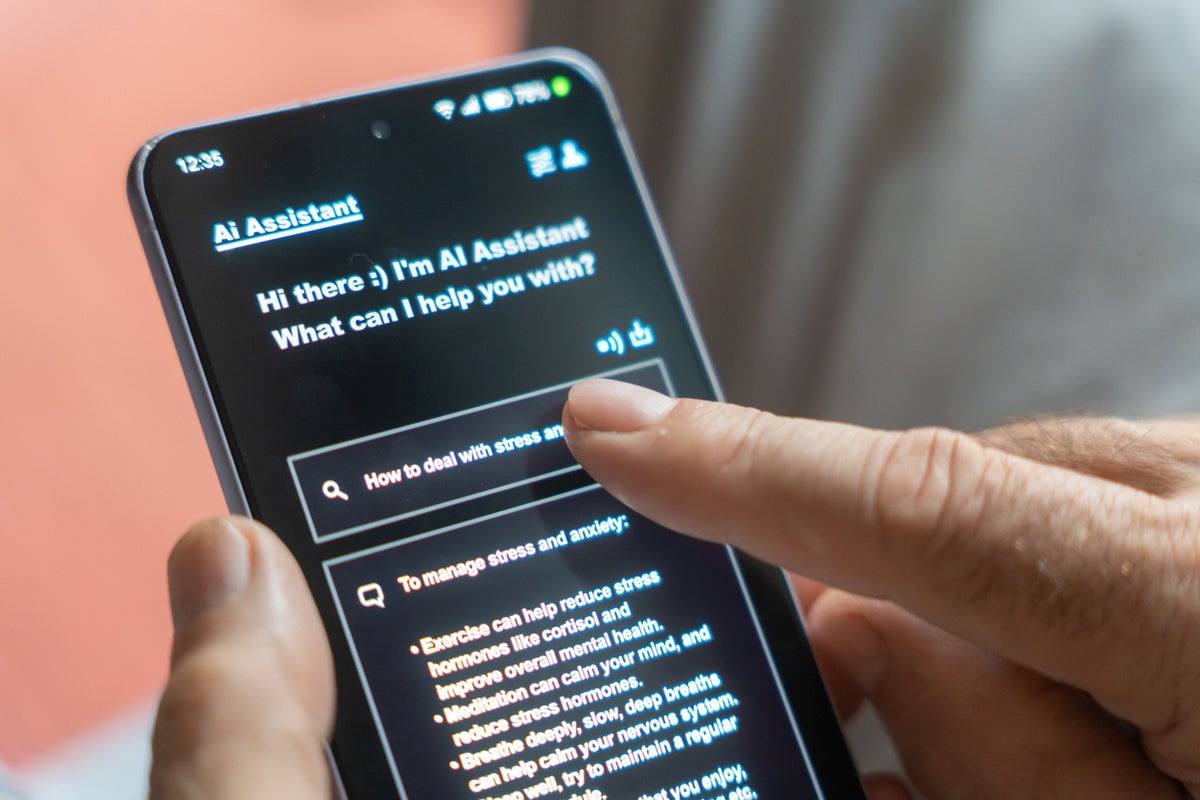

A team from the Lundquist Institute for Biomedical Innovation at Harbor-UCLA Medical Center tested a series of widely used bots as part of their research, including xAI’s Grok, OpenAI’s ChatGPT, Google’s Gemini, Meta’s AI, and High-Flyer’s DeepSeek.

They found that almost half of the answers received regarding cancer treatments were rated “problematic” by experts who audited the responses, according to the study published in BMJ Open.

I mean, that’s in the training data. When you dump the entire internet onto a language model, this is what you get. The data scientists who built these models probably aren’t surprised to find that the training data manifests in the generated outputs.